Object segmentation in videos is a crucial aspect of computer vision that focuses on identifying and separating specific objects from the background in a video sequence. This process involves labelling each pixel in the video frame, distinguishing between the objects and the surrounding environment.
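To make "labelling each pixel" concrete, here is a minimal sketch in plain Python. It assumes a tiny made-up 4x5 grayscale frame and a binary mask (1 for the object, 0 for the background). The data and the helper name `split_by_mask` are purely illustrative and are not part of SAM 2.

```python
# Toy illustration of a segmentation mask: one label per pixel.
# The frame, the mask, and the helper name are invented for this example.

frame = [
    [10, 12, 11, 13, 10],
    [11, 200, 210, 12, 11],
    [10, 205, 215, 13, 12],
    [12, 11, 10, 12, 11],
]

# 1 marks pixels that belong to the object, 0 marks background.
mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 0, 0, 0, 0],
]

def split_by_mask(frame, mask):
    """Return (object_pixels, background_pixels) as flat lists of values."""
    obj, bg = [], []
    for frame_row, mask_row in zip(frame, mask):
        for value, label in zip(frame_row, mask_row):
            (obj if label == 1 else bg).append(value)
    return obj, bg

object_pixels, background_pixels = split_by_mask(frame, mask)
print(len(object_pixels), "object pixels,", len(background_pixels), "background pixels")
```

A video segmentation is then simply one such mask per frame for each tracked object.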

What does it do?

SAM 2 is the first unified model that can perform object segmentation in both images and videos. It accepts a range of input prompts, including clicks, boxes, and masks, so users can select objects of interest with ease. Its zero-shot performance enables it to segment objects, images, and videos that were not part of its training data, making it highly versatile in real-world scenarios.

Its most impressive feature is its ability to track objects across video frames, even if these objects temporarily disappear from view. This is made possible by the model's “per-session memory module”, which captures information about the target object throughout the video sequence. Additionally, SAM 2 can refine its mask predictions based on additional prompts on any frame, which helps keep the segmentation accurate.

SAM 2's streaming architecture processes video frames one at a time, which enables efficient, real-time video processing and makes interactive applications practical.
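The frame-by-frame idea, combined with a memory of the target, can be sketched roughly as follows. This is a toy and not SAM 2's implementation: each frame is reduced to a list of candidate object centres (a stand-in for what a real model would infer from pixels), and the function name `track_stream`, the `memory_size` and `max_jump` parameters, and the nearest-candidate rule are all assumptions made for illustration. What it shows is the control flow: frames are consumed one at a time, only a bounded memory is kept, and the target can be picked up again after it disappears.

```python
from collections import deque

def track_stream(frames, first_click, memory_size=3, max_jump=10):
    """Yield (frame_index, target_position_or_None), one frame at a time.

    frames: iterable of lists of candidate (x, y) object centres per frame.
    first_click: (x, y) prompt marking the target before the first frame.
    """
    # Bounded memory: once full, the oldest entry is dropped automatically.
    memory = deque([first_click], maxlen=memory_size)
    for index, candidates in enumerate(frames):
        last_x, last_y = memory[-1]
        # Keep only candidates within max_jump of the last remembered position.
        nearby = [
            p for p in candidates
            if (p[0] - last_x) ** 2 + (p[1] - last_y) ** 2 <= max_jump ** 2
        ]
        if not nearby:
            # Target not visible in this frame: report None, keep the memory.
            yield index, None
            continue
        target = min(
            nearby,
            key=lambda p: (p[0] - last_x) ** 2 + (p[1] - last_y) ** 2,
        )
        memory.append(target)
        yield index, target

frames = [
    [(10, 10), (50, 50)],  # target near (10, 10), distractor at (50, 50)
    [(12, 11), (50, 50)],
    [(50, 50)],            # target hidden, only the distractor is visible
    [(15, 12), (50, 50)],  # target reappears close to where it was last seen
]

results = list(track_stream(frames, first_click=(10, 10)))
for index, target in results:
    print(index, target)
```

Because `track_stream` is a generator, it never needs the whole video in memory, which is the property that makes interactive, real-time use plausible.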

How was this model trained?

To train SAM 2, Meta AI developed a large and diverse dataset of videos and masklets (object masks over time), created by applying SAM 2 interactively in a model-in-the-loop data engine. This dataset, known as the Segment Anything Video Dataset (SA-V), has also been open-sourced along with the pretrained SAM 2 model, a demo, and code, enabling the research community to build upon this work and accelerate progress in visual segmentation tasks.

Where can this model / technology be used?

Video Editing and Content Creation

Filmmakers and YouTubers can use SAM 2 to track multiple objects within a video and apply various effects, such as blurring backgrounds while keeping specific subjects in focus. The video object segmentation outputs from SAM 2 could serve as input to other AI systems, such as modern video generation models, to enable precise editing capabilities. The ability to segment and manipulate objects in real time provides creators with powerful tools to enhance their storytelling.
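As a rough illustration of the background-blur effect, the sketch below composites a blurred copy of a frame with the original, using a binary mask to decide which pixels stay sharp. It assumes a small grayscale frame stored as nested lists and a mask that already exists (for example, one produced by a segmentation model). The function names `box_blur` and `blur_background` are hypothetical, and a simple 3x3 mean filter stands in for a production-quality blur.

```python
def box_blur(image):
    """3x3 mean blur on a 2D list of numbers; edges use the neighbours that exist."""
    height, width = len(image), len(image[0])
    blurred = []
    for r in range(height):
        row = []
        for c in range(width):
            neighbours = [
                image[rr][cc]
                for rr in range(max(0, r - 1), min(height, r + 2))
                for cc in range(max(0, c - 1), min(width, c + 2))
            ]
            row.append(sum(neighbours) / len(neighbours))
        blurred.append(row)
    return blurred

def blur_background(image, mask):
    """Keep original pixels where mask is 1 and use blurred pixels elsewhere."""
    blurred = box_blur(image)
    return [
        [image[r][c] if mask[r][c] else blurred[r][c] for c in range(len(image[0]))]
        for r in range(len(image))
    ]

image = [
    [0, 0, 0],
    [0, 90, 0],
    [0, 0, 0],
]
subject_mask = [
    [0, 0, 0],
    [0, 1, 0],
    [0, 0, 0],
]

result = blur_background(image, subject_mask)
for row in result:
    print(row)
```

In this toy, the blur is computed over the whole frame, so subject pixels bleed slightly into the blurred background. A real editing pipeline would typically handle the mask edge more carefully.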

Data Annotation / Labelling

The model can quickly and accurately annotate specific objects in images and videos, which is essential for creating specialized datasets. For example, if a researcher needs to annotate images of clothing, SAM 2 can isolate a T-shirt while excluding the head of the person wearing it, streamlining the process of generating tailored training data. This efficiency is invaluable in fields like machine learning, where high-quality annotated datasets are crucial for training models effectively.

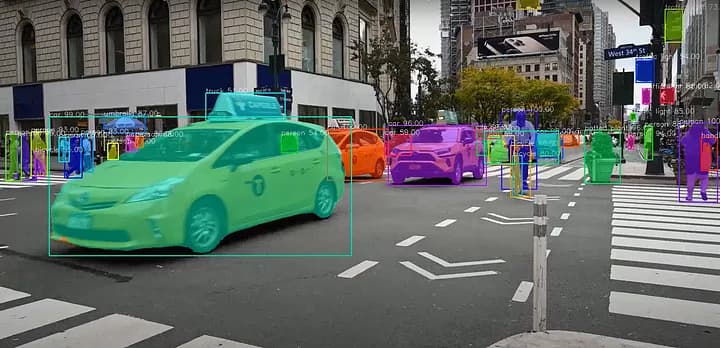
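Once a mask is available, turning it into an annotation record is mostly bookkeeping. The sketch below assumes a binary mask stored as nested lists and produces a bounding box in the `[x, y, width, height]` layout used by COCO-style datasets, plus the pixel area. The function name `mask_to_annotation` and the record's field names are illustrative choices, not a fixed schema.

```python
def mask_to_annotation(mask, label):
    """Convert a binary mask into a simple annotation dict, or None if it is empty."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    cols = [c for row in mask for c, value in enumerate(row) if value]
    if not rows:
        return None  # nothing was segmented in this frame
    x_min, x_max = min(cols), max(cols)
    y_min, y_max = rows[0], rows[-1]
    return {
        "label": label,
        # Bounding box as [x, y, width, height], in pixels.
        "bbox": [x_min, y_min, x_max - x_min + 1, y_max - y_min + 1],
        # Area is the number of pixels set in the mask.
        "area": sum(1 for row in mask for value in row if value),
    }

tshirt_mask = [
    [0, 0, 0, 0, 0, 0],
    [0, 0, 1, 1, 0, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 0, 1, 1, 0, 0],
]

annotation = mask_to_annotation(tshirt_mask, "t-shirt")
print(annotation)
```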

Autonomous Vehicles

Autonomous vehicles require precise identification of various objects, such as pedestrians, other vehicles, and road signs, to navigate safely. SAM 2's ability to segment and track these objects across video frames in real time can enhance the vehicle's understanding of its surroundings, improving safety and efficiency in self-driving technology.

Augmented Reality (AR) Applications

SAM 2 can identify and track objects in real time, enabling immersive Augmented Reality experiences. For example, AR applications can use SAM 2 to overlay digital content accurately onto physical objects. This capability can enhance gaming experiences, educational tools, and retail applications, allowing users to interact with virtual elements that are seamlessly integrated into their real-world environment.

Medical Imaging

In healthcare, SAM 2 can assist in analysing medical imaging sequences, such as MRI or CT scans. The model's ability to segment different anatomical structures or abnormalities in these sequences can aid radiologists in diagnosing conditions more accurately. By automating parts of the analysis process, SAM 2 can help reduce human error and improve patient outcomes.

Scientific Research

Researchers in various scientific fields can leverage SAM 2 for tracking and analysing objects in scientific videos. For example, in biology, the model can be used to monitor cell movement in microscopy videos, providing insights into cellular behaviour and interactions. This capability can accelerate research and enhance the understanding of complex biological processes.
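As a minimal sketch of how per-frame masks could feed a movement analysis, the code below computes the centroid of a mask in each frame and sums the distances between consecutive centroids. It assumes one binary mask per frame for a single cell. The helper names `centroid`, `path_length`, and `single_pixel_mask` are hypothetical, and distances are in pixels rather than physical units.

```python
import math

def centroid(mask):
    """Return the (row, col) centre of the set pixels, or None for an empty mask."""
    points = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
    if not points:
        return None
    count = len(points)
    return (sum(r for r, _ in points) / count, sum(c for _, c in points) / count)

def path_length(masks):
    """Total distance travelled by the centroid, skipping frames with no mask."""
    centres = [c for c in (centroid(m) for m in masks) if c is not None]
    return sum(math.dist(a, b) for a, b in zip(centres, centres[1:]))

def single_pixel_mask(row, col, size=5):
    """Build a size x size mask with exactly one pixel set (test data only)."""
    return [[1 if (r, c) == (row, col) else 0 for c in range(size)] for r in range(size)]

# A cell that moves 3 pixels right, then 4 pixels down.
cell_masks = [
    single_pixel_mask(0, 0),
    single_pixel_mask(0, 3),
    single_pixel_mask(4, 3),
]

total = path_length(cell_masks)
print(total)
```

Multiplying the result by the microscope's pixel size and dividing by the elapsed time would give an average speed, assuming both values are known for the recording.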

Do you see more use cases for this technology in your own work domain? Get in touch with us today to discuss how we can help integrate such AI models and tools into your existing workflows and processes to improve productivity and efficiency.